Imagine waking up to find a sleek, black box on your desk labeled “AI.” It promises speed, pattern-spotting, and answers at the click of a button – an object of fascination, capable of dazzling demonstrations. But leave that box on a crowded desk without electricity, a clear brief, or anyone who knows how to plug it in, and it becomes little more than a polished ornament.

AI is an extraordinary set of tools: powerful models, advanced automation, and new ways to forecast and personalize. Yet tools do not set strategy, decide trade-offs, or carry institutional memory. They amplify the inputs and structures around them. When data is sparse or biased, when incentives reward the wrong behaviors, when customers can’t interpret or trust algorithmic decisions, AI’s advantages flatten into missed opportunities or unintended consequences.

This article unpacks why AI alone won’t save your business. We’ll explore the constraints – from data quality and governance to culture and operational integration – and show how AI must be paired with clear strategy, human judgment, and durable processes to produce real, lasting value. If you hoped AI would be a shortcut, prepare instead for a careful journey: one where technology is only part of the map.

Why AI Is a Tool, Not a Strategy: Align Investments with Clear Business Outcomes

AI behaves like a magnifying glass on whatever strategy you already have: it accelerates processes, surfaces hidden patterns and automates repetitive decisions, but it cannot invent a north star. Investments that lack a target become expensive pilots rather than durable advantages. Commit to measurable outcomes, build clear governance around model use, and plan for change management so models deliver predictable business value instead of sporadic hype.

Treat projects as tools in a toolkit – chosen for the job, traced to a metric, and retired if they fail to deliver. The practical priorities are simple to state:

- Define the outcome: map the metric that must move (revenue, cost, time-to-decision).

- Start small, prove value: pilot on a high-impact use case with fast feedback loops.

- Measure and iterate: continuous evaluation beats one-off deployments.

- Invest in people & data: models are only as good as the data and teams behind them.

| Investment Focus | Expected Outcome |
|---|---|
| Customer service automation | Faster responses, lower support cost |
| Sales enablement | Higher conversion, better-qualified leads |
| Data & infrastructure | Reusable pipelines, faster experiments |

People and Processes First: Train Teams, Redesign Workflows and Champion Change

AI tools spark possibilities, but value emerges when people learn to wield them. Invest in role-based training, peer learning circles and scenario-driven labs so staff move from curiosity to competence. Create clear learning paths that tie new skills to everyday tasks, and reward experimentation with low-risk pilot projects; this turns knowledge into operational muscle. Build a culture of continuous feedback where mistakes are diagnostics, not punishments, and front-line voices shape how automation is applied.

- Map skills: identify gaps against future workflows

- Pilot fast: short experiments with defined success criteria

- Govern: simple rules to protect data and decisions

- Scale: propagate wins via documented playbooks

| Priority | Timeframe | Owner |
|---|---|---|
| Upskilling | 3 months | HR + Line Leads |
| Workflow Redesign | 6-12 weeks | Process team |
| Change Sponsorship | Ongoing | Executive Sponsor |

Tools must slot into redesigned processes with visible accountability if benefits are to stick. Assign change champions across teams, simplify handoffs, and bake monitoring into new flows so you can measure impact and iterate. When leaders model adoption and celebrate small wins, resistance fades and the organization adapts, turning AI from a novelty into a reliable capability that amplifies human judgment rather than replacing it.

Data Readiness and Governance: Clean, Curate and Protect the Information AI Depends On

Think of machine learning models as high-performance instruments: they only sing when fed the right notes. Without quality inputs, predictions wobble, decisions misfire and stakeholders lose trust. Investing in metadata, lineage and strong access controls is not an IT chore – it’s the foundation of reliable outcomes. Cleanse pipelines, enforce schemas and capture provenance so teams can trace answers back to their source instead of making blind bets on shiny algorithms.

- Audit & Catalog: Know what you have and where it lives.

- Standardize: Common formats and taxonomies reduce friction.

- Automate Cleansing: Remove duplicates and detect bias and drift at scale.

- Assign Ownership: Clear stewards keep data healthy.

- Secure & Monitor: Encryption, logging and anomaly detection protect value.

| Maturity | Immediate Focus |
|---|---|
| Emerging | Inventory & basic cleansing |
| Managed | Governance, lineage, access controls |
| Optimized | Automation, monitoring, continual enhancement |

When data is treated as a product, AI becomes an amplifier rather than an illusion. The real returns come from predictable, auditable pipelines and a culture that values stewardship over shortcuts. Prioritize governance so models amplify insight instead of propagating error – because without a solid information foundation, even the most advanced algorithms are just expensive guesswork.
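As a rough illustration of what enforcing schemas and removing duplicates can look like at the smallest scale, here is a minimal Python sketch. The schema, field names and sample records are hypothetical and exist only for this example; a real pipeline would usually rely on a dedicated validation tool and durable storage.

```python
from dataclasses import dataclass

# Hypothetical schema for illustration: field name -> expected Python type.
CUSTOMER_SCHEMA = {"customer_id": str, "email": str, "lifetime_value": float}


@dataclass
class ValidationReport:
    valid: list       # records that passed every check
    rejected: list    # (record, reason) pairs kept for the data steward
    duplicates: int   # records dropped because their key was already seen


def validate_records(records, schema, key):
    """Enforce a schema and drop duplicate keys, recording why rows fail."""
    seen = set()
    valid, rejected, duplicates = [], [], 0
    for record in records:
        missing = [f for f in schema if f not in record]
        if missing:
            rejected.append((record, f"missing fields: {missing}"))
            continue
        wrong = [f for f, t in schema.items() if not isinstance(record[f], t)]
        if wrong:
            rejected.append((record, f"wrong type: {wrong}"))
            continue
        if record[key] in seen:
            duplicates += 1
            continue
        seen.add(record[key])
        valid.append(record)
    return ValidationReport(valid, rejected, duplicates)


# Made-up sample data: one clean row, one duplicate, two broken rows.
records = [
    {"customer_id": "c1", "email": "a@example.com", "lifetime_value": 120.0},
    {"customer_id": "c1", "email": "a@example.com", "lifetime_value": 120.0},
    {"customer_id": "c2", "email": "b@example.com"},
    {"customer_id": "c3", "email": "c@example.com", "lifetime_value": "high"},
]
report = validate_records(records, CUSTOMER_SCHEMA, key="customer_id")
print(len(report.valid), len(report.rejected), report.duplicates)  # 1 2 1
```

Keeping the rejection reasons, rather than silently dropping bad rows, is what lets an assigned data owner trace problems back to their source.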

Integration Over Isolation: Connect AI to Systems, Metrics and Daily Operations

AI models are only as useful as the connections that feed and learn from them – they must be braided into the company’s existing systems rather than left as standalone novelties. Integration is the multiplier: when data pipelines, CRMs, and production systems share context with your models, insights become actions instead of curiosities. Consider where AI must touch your stack to drive value:

- Customer records and CRM events – to personalize and prioritize outreach

- Billing and inventory systems – to enable real-time recommendations and prevent oversell

- Monitoring and logging pipelines – to detect model drift and operational anomalies

- Reporting dashboards – to make predictions part of everyday decisions

Turning AI into reliable operational muscle means tying it to the metrics and routines people actually use. Build closed feedback loops that translate model predictions into measurable outcomes and back again for continuous learning. Make operational ownership explicit: assign clear human roles for model validation, exception handling, and retraining cadence, and embed success criteria into daily workflows:

- Define KPI triggers that cause automated actions or human review

- Log decisions and outcomes so models can be audited and improved

- Automate low-risk actions, escalate uncertain cases to humans

- Train teams on interpreting model outputs and adjusting thresholds
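The routing and logging ideas in the list above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a prescribed design: the 0.9 threshold, the `high_risk` flag and the case identifiers are all hypothetical, and the in-memory list stands in for whatever durable store you would actually use.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATE = "automate"
    HUMAN_REVIEW = "human_review"


@dataclass
class Decision:
    case_id: str
    prediction: str
    confidence: float
    route: Route


def route_prediction(case_id, prediction, confidence, high_risk, threshold=0.9):
    """Automate only confident, low-risk cases; send everything else to a person."""
    if high_risk or confidence < threshold:
        route = Route.HUMAN_REVIEW
    else:
        route = Route.AUTOMATE
    return Decision(case_id, prediction, confidence, route)


# Assumption: a plain list stands in for durable, queryable storage.
decision_log = []

# Made-up cases: (case_id, prediction, confidence, high_risk).
cases = [
    ("t-1", "refund_approved", 0.97, False),
    ("t-2", "refund_approved", 0.62, False),
    ("t-3", "account_closed", 0.99, True),
]
for case in cases:
    decision = route_prediction(*case)
    decision_log.append(decision)  # log every decision so it can be audited later
    print(decision.case_id, decision.route.value)
```

Exposing the threshold as a parameter is deliberate: it is the setting teams will want to adjust as they compare logged decisions against real outcomes.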

Risk, Ethics and Compliance: Build Guardrails, Audit Trails and Transparent Decision Paths

AI can generate insights, but it can’t inherit your ethics or compliance instincts on its own. Treat models as tools that must operate inside well-defined boundaries: technical controls need to be paired with procedural ones. Put simply, outputs without context are a risk. Implement a few non-negotiables to avoid surprises:

- Policy guardrails: clear, versioned rules that define acceptable use.

- Auditable logs: immutable traces of inputs, decisions and outcomes.

- Explainability features: accessible rationales for model outputs.

- Human-in-the-loop triggers: escalation points for ambiguous or sensitive cases.

- Continuous reviews: scheduled checks for drift, bias and regulatory change.

These building blocks convert opaque model behavior into accountable processes that stakeholders can trust.
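One common way to approximate the auditable, tamper-resistant logs listed above is to chain entries together with hashes, so that any later edit breaks the chain and can be detected. The Python sketch below uses only the standard library; the entry fields and sample values are hypothetical, and an in-memory list is tamper-evident rather than truly immutable, so treat it as an illustration of the idea rather than a production design.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Hash an entry body deterministically (sorted keys, UTF-8 JSON)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, inputs, decision, outcome):
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "inputs": inputs,
        "decision": decision,
        "outcome": outcome,
        "prev_hash": prev_hash,
    }
    log.append({**body, "hash": _digest(body)})


def verify_chain(log):
    """Return True only if no entry was altered, removed or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


# Made-up entries for illustration.
audit_log = []
append_entry(audit_log, {"ticket": "t-1"}, "refund_approved", "customer_notified")
append_entry(audit_log, {"ticket": "t-2"}, "escalated", "pending_review")
print(verify_chain(audit_log))  # True

audit_log[0]["decision"] = "refund_denied"  # simulate after-the-fact tampering
print(verify_chain(audit_log))  # False
```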

Turning theory into practice requires more than checklists – it needs cross-functional rituals and visible decision maps so everyone can see why a choice was made and who owns it. Make compliance a living part of delivery, not a post-hoc audit:

- Ownership mapped to outcomes: teams responsible for specific risks and remediations.

- Published decision paths: diagrams and logs that explain end-to-end choices.

- Snapshot audits: automated captures of system state at key moments.

- Closed feedback loops: user reports feeding model and policy updates.

When guardrails, trails and transparent decision paths are embedded into everyday workflows, AI shifts from being an inscrutable expense to a governed accelerator of business value.

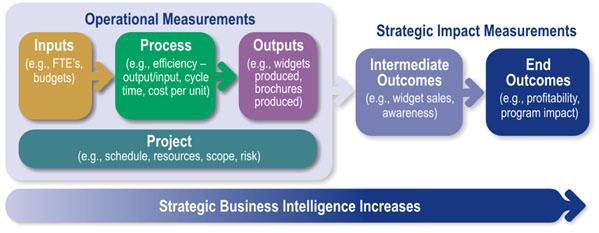

Measure Real Value, Not Vanity Metrics: Use Outcome-Based KPIs and Continuous Experimentation

Stop celebrating surface signals and start rewarding outcomes that actually move the business needle. Instead of counting impressions, clicks, or model accuracy in isolation, tie every AI initiative to a clear business result – higher retention, faster time-to-value, lower cost-to-serve, or increased lifetime revenue. Build KPIs that ask, “Did this change improve a customer’s experience or the unit economics?” and instrument experiments so you can attribute shifts directly to the interventions. Continuous experimentation means treating every deployment as a hypothesis: short cycles, measurable effects, and ruthless pruning of features that look clever but don’t deliver when cash and attention are at stake.

- Outcome KPIs: retention rate, revenue per customer, churn reduction

- Experiment signals: lift, confidence interval, time-to-impact

- Cost metrics: cost-per-resolution, operational savings, ROI per model

Make experimentation part of your operating rhythm: run small, instrumented tests, review results with cross-functional stakeholders, and iterate on what moves the outcome metrics – not the dashboards. Create a lightweight governance model that prioritizes experiments with the highest expected business value, then scale winners. A few well-designed, outcome-driven tests will teach you more about practical AI impact than a dozen vanity dashboards ever will.
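To make the experiment signals mentioned above concrete, here is a minimal Python sketch that computes lift and an approximate 95% confidence interval for a two-group conversion test. It assumes a simple normal-approximation (Wald) interval for the difference between two proportions, which is only reasonable for fairly large samples, and the conversion counts are invented for illustration.

```python
import math


def lift_with_interval(control_conv, control_n, treat_conv, treat_n, z=1.96):
    """Lift of treatment over control, with a Wald interval on the difference."""
    p_c = control_conv / control_n
    p_t = treat_conv / treat_n
    diff = p_t - p_c
    # Standard error of the difference between two independent proportions.
    se = math.sqrt(p_c * (1 - p_c) / control_n + p_t * (1 - p_t) / treat_n)
    return {
        "absolute_lift": diff,          # percentage-point change in conversion
        "relative_lift": diff / p_c,    # change relative to the control rate
        "ci_low": diff - z * se,
        "ci_high": diff + z * se,
    }


# Made-up numbers: 4,000 customers per group.
result = lift_with_interval(control_conv=200, control_n=4000,
                            treat_conv=260, treat_n=4000)
for name, value in result.items():
    print(f"{name}: {value:.4f}")

# If the interval excludes zero, the lift is unlikely to be noise alone.
print("interval excludes zero:", result["ci_low"] > 0 or result["ci_high"] < 0)
```

A statistically detectable lift still has to be weighed against the cost metrics in the list above before a feature earns its place.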

| Vanity Metric | Outcome KPI |
|---|---|
| Impressions | Incremental conversions / revenue |
| Model accuracy | Reduction in manual reviews / cost |
| Daily active users | Customer lifetime value |

Scale with Discipline: Start Small, Iterate Fast and Invest in Operations and Executive Sponsorship

Treat adoption like a sequence of thoughtful experiments rather than a one-time deployment. Launch compact pilots that target a single, high-value workflow, define clear hypotheses and measurable outcomes, and build short feedback loops so you can learn in days, not quarters. By focusing on small, repeatable wins you create a culture of evidence-based decisions – where teams celebrate validated learnings and retire ideas that don’t move the needle. Start with real user impact, instrument everything you can measure, and make data the compass for every next step.

- Choose one workflow to optimize first

- Set clear KPIs and success criteria

- Run rapid iterations with cross-functional teams

- Invest in ops to scale the reliable parts

Scaling responsibly means funding the invisible work: robust operations, observability, and governance that keep models reliable and compliant as they touch more users. Executive backing accelerates adoption by aligning priorities, clearing budgetary and organizational friction, and championing outcomes when trade-offs are required. Treat operational capacity and senior sponsorship as first-class investments – they turn isolated proofs into resilient capabilities and let you iterate fast without breaking things.

Future Outlook

AI is powerful, but it’s a tool, not a takeover. Left alone it’s a shining engine with no map: it needs clean data, clear strategy, ethical guardrails, and people who can steer, interpret and adapt. The businesses that thrive will be those that pair machine speed with human judgment, continuous learning, and organizational craft – treating AI as a co-pilot, not a captain. If you build the systems and culture around it, AI will amplify what you already do well; on its own, it’s only the beginning of the journey, not the destination.