Once a startup mantra and a Silicon Valley badge of honor, “fail fast” promised a brisk, elegant shortcut through uncertainty: discover what doesn’t work, discard it, move on. The phrase captured a culture’s hunger for speed and iteration, a pragmatic antidote to paralysis and overplanning. But as that mentality migrated from tech incubators into hospitals, schools, finance, and government programs, its simplicity began to fray at the edges.

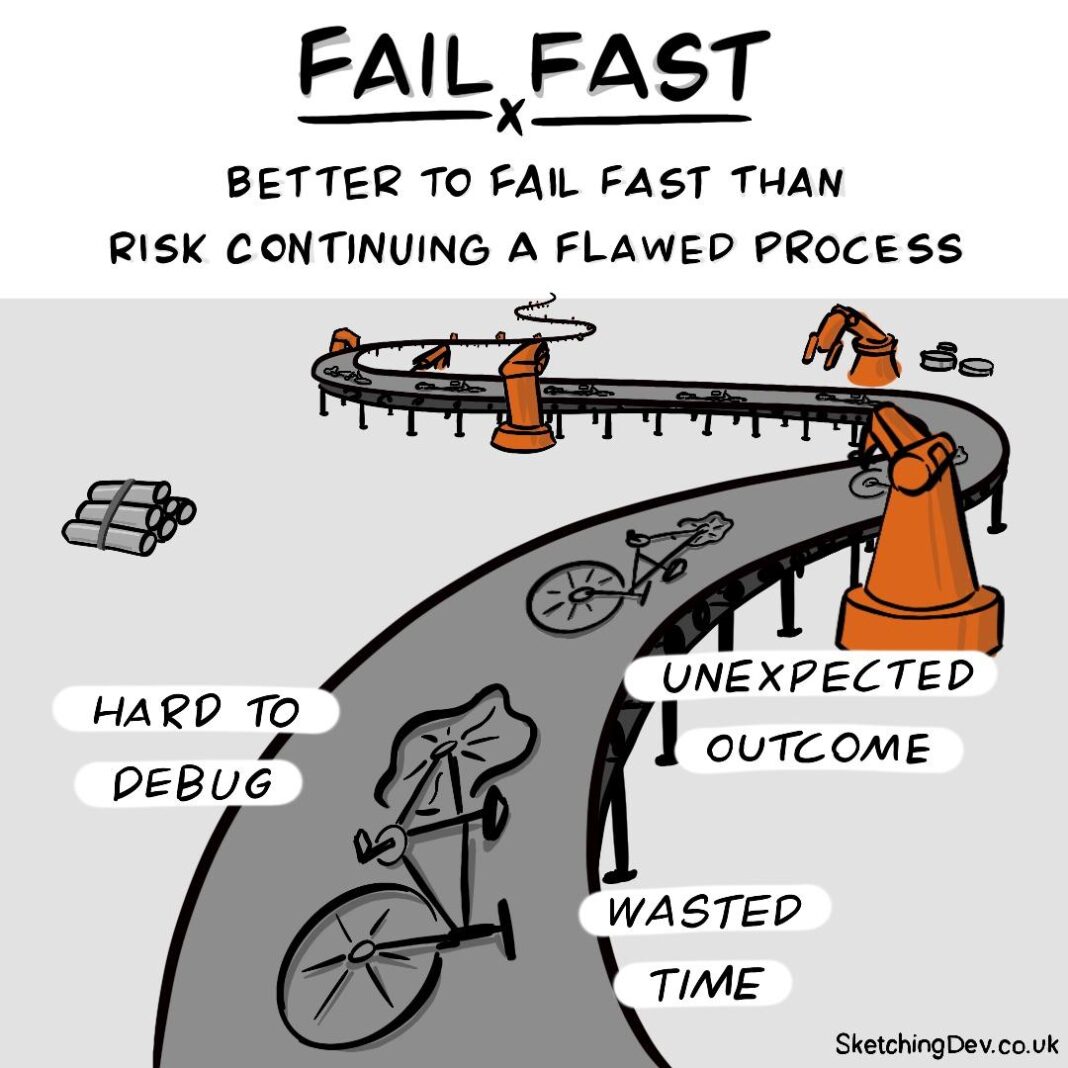

Failing fast assumes a controlled sandbox where mistakes are cheap, consequences are contained, and learning is immediate. In many real-world systems, errors cascade, costs compound, and the most important insights arrive only after sustained effort. Meanwhile, the rhetoric of rapid abandonment can discourage deep inquiry, undercut long-term commitments, and normalize discarding people and processes rather than reshaping them.

This piece explores why “fail fast” no longer reads as universally wise counsel. We’ll look at the contexts in which it misfires, the hidden costs it overlooks, and the alternative attitudes (resilient experimentation, disciplined patience, and adaptive stewardship) that better suit complex, high-stakes work. The aim is not to declare the mantra dead, but to ask when it helps, when it harms, and what wiser questions we might ask in its place.

The Myth of Momentum: Why Failing Fast Can Mask Fragile Assumptions

Ever since lean startups popularized the mantra of pivoting quickly, teams have treated rapid iteration as proof of progress. But chasing early pivots often produces momentum without depth: a flurry of experiments that feel productive because something changed, not because underlying beliefs were validated. What looks like agility can be nothing more than noise amplification, with small, fragile hypotheses nudged into different shapes to avoid confronting core flaws.

When speed becomes the primary metric, durable learning gets sidelined. Short experiments can conceal brittle assumptions about customers, value, or distribution, and the resulting “wins” frequently reflect clever measurement rather than real product-market fit. To reveal those hidden cracks, teams must pair fast cycles with intentional checks: broad sampling, longer time horizons for retention, and explicit guardrails that surface systemic risks instead of hiding them behind the illusion of constant motion.

- Momentum signals that deserve scrutiny

- Fragile assumptions often masked by early optimism

- Durability tests to add to fast experiments

| Signal | Hidden Risk |
|---|---|
| Spike in signups | Paid, non-engaged users |
| High click-through | Poor retention |
| Positive interviews | Selection bias |
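To make the “Poor retention” row concrete, a durability check can sit next to raw signup counts. The sketch below is a minimal Python illustration, assuming activity is available as per-user lists of dates; `retention_rate`, the 30-day window, and the cohort data are all hypothetical.

```python
from datetime import date, timedelta


def retention_rate(signups, activity, days=30):
    """Share of a cohort active again at least `days` after signing up.

    signups:  dict mapping user_id -> signup date
    activity: dict mapping user_id -> list of dates the user was active
    """
    if not signups:
        return 0.0
    retained = 0
    for user, joined in signups.items():
        cutoff = joined + timedelta(days=days)
        # Count the user as retained if any activity falls on or after the cutoff.
        if any(d >= cutoff for d in activity.get(user, [])):
            retained += 1
    return retained / len(signups)


# Hypothetical cohorts: an organic week and a week boosted by paid acquisition.
organic = {f"o{i}": date(2024, 1, 1) for i in range(4)}
paid = {f"p{i}": date(2024, 1, 8) for i in range(10)}
activity = {
    "o0": [date(2024, 2, 5)], "o1": [date(2024, 2, 10)], "o2": [date(2024, 2, 1)],
    "p0": [date(2024, 2, 20)], "p1": [date(2024, 1, 9)],
}
print(len(organic), retention_rate(organic, activity))  # 4 0.75
print(len(paid), retention_rate(paid, activity))        # 10 0.1
```

Signups more than doubled in the paid week, yet the share of users still around a month later collapsed; only the second number says anything about durability.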

The Human Toll of Rapid Failure Cycles and How They Erode Learning

Like a treadmill tuned to ever-faster speeds, rapid failure cycles can turn experimentation into an endurance sport, one where the human body and mind pay the price. The cost is tangible: decision fatigue from constant context-switching, a creeping sense of shame when “failure” is treated as disposable, and eroded mentorship as seniors rush to ship rather than teach. Emotional debt accumulates when teams are asked to iterate without time to process what went wrong; people stop learning and start surviving.

- Exhaustion and burnout

- Shame, silence, and hidden mistakes

- Shallow experiments that produce no usable insight

- Loss of institutional memory as lessons aren’t documented

When speed outranks sensemaking, the organization loses its ability to turn failure into knowledge. Speedy iterations that aren’t paired with reflection create noise, not signal: patterns go unnoticed, and the same mistakes repeat in new guises. To reclaim learning, teams must build rituals that slow the loop: block time for reflection, record experiments in simple, shareable formats, and cultivate psychological safety so people can speak honestly.

- Pause to sense-make

- Document decisions and outcomes

- Protect deep work and mentorship time

- Reward synthesis, not just velocity

When Early Signals Mislead: Data Biases, Sampling Errors, and False Negatives

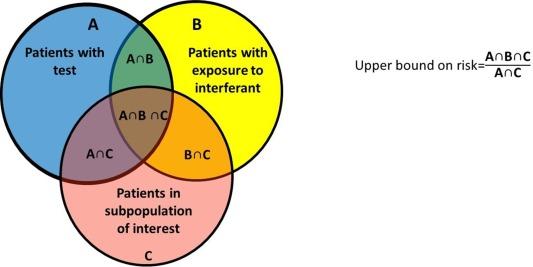

First impressions from your dashboards can feel convincing, but often they’re the product of quirks, not truth. Tiny cohorts, skewed funnels, and overlooked segments turn promising early metrics into mirages; false negatives hide real opportunities, and data biases amplify noise into narratives. Watch for the usual culprits:

- Survivorship bias – only the successes remain visible

- Selection bias – your sample isn’t your population

- Confirmation bias – you interpret noise to fit a story

- Underpowered samples – Type II errors that mask real effects

These forces can push teams to abandon experiments or features that actually needed time or a different lens to show value.
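The Type II risk can be quantified before a test starts. As a sketch, the functions below use the standard normal approximation for a two-sided, two-proportion z-test (a textbook technique swapped in here, not something the text prescribes); the function names and the 10% to 12% example are assumptions for illustration.

```python
from math import ceil, sqrt
from statistics import NormalDist

_Z = NormalDist()  # standard normal distribution


def _standard_errors(p1, p2):
    p_bar = (p1 + p2) / 2
    se_null = sqrt(2 * p_bar * (1 - p_bar))       # spread if there were no effect
    se_alt = sqrt(p1 * (1 - p1) + p2 * (1 - p2))  # spread under the true effect
    return se_null, se_alt


def power_two_proportions(p1, p2, n_per_arm, alpha=0.05):
    """Approximate power of a two-sided two-proportion z-test.

    Ignores the negligible chance of rejecting in the wrong direction.
    """
    z_alpha = _Z.inv_cdf(1 - alpha / 2)
    se_null, se_alt = _standard_errors(p1, p2)
    z = (abs(p2 - p1) * sqrt(n_per_arm) - z_alpha * se_null) / se_alt
    return _Z.cdf(z)


def n_per_arm_for_power(p1, p2, power=0.8, alpha=0.05):
    """Users needed in each arm to detect p1 -> p2 with the given power."""
    z_alpha = _Z.inv_cdf(1 - alpha / 2)
    z_beta = _Z.inv_cdf(power)
    se_null, se_alt = _standard_errors(p1, p2)
    return ceil(((z_alpha * se_null + z_beta * se_alt) / (p2 - p1)) ** 2)


# Hypothetical test: baseline conversion 10%, true effect lifts it to 12%.
print(round(power_two_proportions(0.10, 0.12, 500), 2))  # about 0.17
print(n_per_arm_for_power(0.10, 0.12))                   # about 3,840
```

With 500 users per arm, a real two-point lift is detected only about 17% of the time, so roughly five times out of six the test would look like a failure; about 3,840 users per arm are needed for 80% power.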

Rather than treating the first dip or lift as gospel, build processes that respect uncertainty: longer windows, smarter controls, and clearer hypotheses. Combine quantitative fixes with qualitative checks to catch what numbers miss:

- Pre-register outcomes and stopping rules

- Use holdout cohorts and stratified sampling

- Apply Bayesian priors or sequential analysis to temper early swings

- Pair analytics with user interviews to surface hidden signals
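One way to temper early swings with a prior is Beta-Binomial updating: treat a historical baseline as a number of pseudo-observations and blend it with new data. This is a minimal sketch under that assumption; `beta_posterior`, the 10% baseline, and the prior strength of 200 are hypothetical choices, and how much weight a prior deserves is a judgment call.

```python
from math import sqrt


def beta_posterior(prior_rate, prior_strength, successes, trials):
    """Posterior mean and standard deviation of a rate under a Beta prior.

    prior_rate:     prior belief about the rate (e.g. a historical baseline)
    prior_strength: how many pseudo-observations the prior is worth;
                    larger values damp early swings more
    """
    a = prior_rate * prior_strength + successes
    b = (1 - prior_rate) * prior_strength + (trials - successes)
    mean = a / (a + b)
    sd = sqrt(a * b / ((a + b) ** 2 * (a + b + 1)))
    return mean, sd


# Hypothetical prior: a 10% baseline worth 200 observations.
early_mean, early_sd = beta_posterior(0.10, 200, successes=6, trials=20)
later_mean, later_sd = beta_posterior(0.10, 200, successes=300, trials=1000)
print(round(early_mean, 3), round(later_mean, 3))
```

An early 6-of-20 result (30%) moves the estimate only to about 12%, while 300 of 1,000 moves it to about 27%: strong evidence still wins, but a handful of lucky users does not.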

When early signals conflict, the wiser move is not instant abandonment but calibrated patience – measure better, test broader, and let the true pattern emerge.

Designing for Sustainable Learning: Slow Experiments, Controlled Pilots, and Repeatable Measures

The shift away from “fail fast” asks us to treat learning as a garden, not an assembly line: nurture, observe, and iterate with care. Slow experiments let teams preserve context, reduce cognitive load, and capture subtler signals that crash-and-burn trials miss. In practice that means designing small, focused trials that prioritize fidelity over speed and building rhythms for reflection.

- Depth over breadth – run fewer experiments, but capture richer qualitative and quantitative evidence.

- Stable conditions – keep variables controlled so outcomes are attributable and repeatable.

- Reflective cadence – schedule intentional pauses to synthesize learning before the next step.

Controlled pilots turn experiments into dependable instruments of change by establishing repeatable measures and clear handoffs: a hypothesis, a protocol, and an agreed metric. This reduces noise, helps leaders make defensible decisions, and builds organizational muscle memory for scaling what works.

- Consistent metrics – use the same measures across pilots so results can be compared.

- Clear protocol – document steps so others can reproduce the pilot reliably.

- Slow scaling – expand in deliberate increments, validating at each stage rather than leaping to full rollout.
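The pilot elements above (a hypothesis, an agreed metric, and increments validated one at a time) can be captured in a small record. The following Python sketch is hypothetical throughout: the `Pilot` class, the 1/5/25/100% stages, and the 0.30 threshold are illustrative assumptions rather than a prescribed rollout plan.

```python
from dataclasses import dataclass, field


@dataclass
class Pilot:
    """Hypothetical pilot record: one hypothesis, one agreed metric, staged exposure."""
    hypothesis: str
    metric: str                       # reuse the same metric name across pilots
    threshold: float                  # minimum value of `metric` required at every stage
    stages: tuple = (1, 5, 25, 100)   # percent of users exposed at each stage
    stage_index: int = 0
    history: list = field(default_factory=list)

    @property
    def exposure(self):
        return self.stages[self.stage_index]

    def record_stage(self, observed):
        """Log a stage result; advance one stage only if the threshold is met."""
        passed = observed >= self.threshold
        self.history.append((self.exposure, observed, passed))
        if passed and self.stage_index < len(self.stages) - 1:
            self.stage_index += 1
        return passed


pilot = Pilot("Guided onboarding raises week-4 retention", "week4_retention", 0.30)
pilot.record_stage(0.34)   # clears the bar at 1%, so exposure moves to 5%
pilot.record_stage(0.28)   # misses at 5%, so exposure holds at 5%
print(pilot.exposure)      # 5
```

Holding at the current stage on a miss, rather than rolling back or leaping ahead, keeps each expansion a deliberate decision.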

Building Organizational Memory: Documentation, Feedback Loops, and Shared Accountability

The old mantra of moving fast and failing quickly often leaves organizations with a trail of forgotten experiments and little to show for the lessons learned. Without intentional mechanisms to capture context, causes, and corrective steps, each iteration can feel like a fresh experiment rather than a step in a coherent journey. Durable documentation (short, searchable, and connected to decision makers) turns isolated failures into building blocks, letting teams surface patterns, reduce repetition, and accelerate true progress.

Turning speed into sustainable learning means pairing rapid experiments with repeatable processes that preserve knowledge:

- Document decisions – record the hypothesis, metrics, trade-offs, and who signed off.

- Feedback loops – schedule follow-ups that verify assumptions and surface surprises.

- Shared accountability – make learning the team’s obligation, not the individual’s burden.

Small rituals (post-mortem summaries, one-page experiment templates, and a shared repository tagged by outcome) create a multiplying effect: fast iterations plus reliable memory add up to real organizational intelligence.
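A shared repository tagged by outcome need not be elaborate. As a minimal sketch, assuming records live in memory, the hypothetical `ExperimentRecord` below carries the fields named in the list above (hypothesis, metrics, trade-offs, sign-off) plus an outcome tag, and `ExperimentLog` indexes them so past work can be found by outcome or by metric. A real team would more likely use a wiki or database; the names and sample entries are illustrative.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentRecord:
    """Hypothetical one-page template with the fields named in the text."""
    name: str
    hypothesis: str
    metrics: tuple        # metric names, kept consistent across experiments
    trade_offs: str
    signed_off_by: str
    outcome: str          # tag such as "validated", "invalidated", "inconclusive"


class ExperimentLog:
    """Minimal in-memory repository indexed by outcome tag and by metric."""

    def __init__(self):
        self._by_outcome = defaultdict(list)
        self._by_metric = defaultdict(list)

    def add(self, record):
        self._by_outcome[record.outcome].append(record)
        for metric in record.metrics:
            self._by_metric[metric].append(record)

    def with_outcome(self, outcome):
        return [r.name for r in self._by_outcome.get(outcome, [])]

    def touching_metric(self, metric):
        return [r.name for r in self._by_metric.get(metric, [])]


log = ExperimentLog()
log.add(ExperimentRecord("checkout-copy", "Shorter copy lifts conversion",
                         ("conversion",), "Less legal detail", "A. Reviewer", "invalidated"))
log.add(ExperimentRecord("onboarding-tour", "A guided tour lifts week-4 retention",
                         ("week4_retention", "conversion"), "Slower first session",
                         "B. Reviewer", "validated"))
print(log.touching_metric("conversion"))   # ['checkout-copy', 'onboarding-tour']
print(log.with_outcome("invalidated"))     # ['checkout-copy']
```

Indexing by metric is what lets a team ask “what have we already tried on conversion?” before running the same experiment again.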

Practical Alternatives to Failing Fast: Bounded Risk Tests, Structured Decision Reviews, and Clear Scaling Criteria

Treat experiments like controlled fires: keep them small, visible, and extinguishable.

- Bounded Risk Tests mean explicit limits on time, budget, and user exposure: run a two-week feature toggle with a 1% traffic cap and a built-in kill switch rather than shipping to everyone.

- Structured Decision Reviews turn intuition into accountable checkpoints: a short, documented review that scores impact, effort, and evidence before the next investment.

- Clear Scaling Criteria turn hope into hard gates: predefine the metric thresholds (e.g., LTV > CAC, 30-day retention ≥ X, error rate < Y) that must be met to expand beyond the pilot.
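A bounded risk test and a scaling gate can both be expressed as small, explicit rules. The sketch below assumes hash-based bucketing for the traffic cap (a common technique, swapped in here for illustration), a date-based time limit, and a boolean kill switch; `BoundedTest`, `meets_scaling_criteria`, and every numeric value are hypothetical, and the `min_retention` and `max_error_rate` parameters stand in for the unspecified X and Y.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass
class BoundedTest:
    """Hypothetical guardrails for one experiment: exposure cap, end date, kill switch."""
    name: str
    exposure_pct: float   # 1.0 means at most 1% of users
    ends_on: date         # e.g. two weeks after launch
    killed: bool = False  # flip to True to switch the feature off for everyone

    def is_exposed(self, user_id, today):
        """Return True if this user should see the feature today."""
        if self.killed or today > self.ends_on:
            return False
        # Hash name + user id so each user lands in a stable bucket from 0 to 9999.
        digest = hashlib.sha256(f"{self.name}:{user_id}".encode()).hexdigest()
        bucket = int(digest[:8], 16) % 10_000
        return bucket < self.exposure_pct * 100


def meets_scaling_criteria(ltv, cac, retention_30d, error_rate,
                           min_retention, max_error_rate):
    """Hard gate: every predefined threshold must hold before expanding the pilot."""
    return ltv > cac and retention_30d >= min_retention and error_rate < max_error_rate


test = BoundedTest("new-search", exposure_pct=1.0, ends_on=date(2024, 6, 14))
exposed = sum(test.is_exposed(u, date(2024, 6, 3)) for u in range(100_000))
print(exposed)  # close to 1,000 (about 1% of 100,000 users)

# Illustrative thresholds only; a team would fix its own X and Y before launch.
print(meets_scaling_criteria(ltv=120, cac=80, retention_30d=0.42, error_rate=0.004,
                             min_retention=0.40, max_error_rate=0.01))  # True
```

Hashing the user id, rather than sampling at random on each request, keeps every user in the same group for the life of the test, so the 1% cap is a stable population rather than a flicker.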

Operationalizing these alternatives is straightforward: codify the experiment guardrails, assign a decision owner, and require a concise readout.

- Checklist for each test: hypothesis, maximum exposure, measurement window, stop triggers, and a named reviewer.

- Cadence: weekly quick-checks, monthly structured reviews, and a scale-or-kill decision within a fixed window.

This approach preserves the speed of learning while preventing runaway costs, reputational damage, and the illusion that all failure is equally valuable.
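The per-test checklist and the fixed decision window can be enforced mechanically before launch. This is a minimal sketch assuming a test plan is a plain dictionary; the key names, `missing_items`, `decision_due`, and the 42-day window are hypothetical.

```python
from datetime import date, timedelta

# Checklist items taken from the text; the dict keys are hypothetical names.
REQUIRED_ITEMS = ("hypothesis", "max_exposure", "measurement_window",
                  "stop_triggers", "reviewer")


def missing_items(plan):
    """List checklist items that are absent or empty (falsy) in a test plan dict."""
    return [item for item in REQUIRED_ITEMS if not plan.get(item)]


def decision_due(start, window_days):
    """Date by which the test must end in an explicit scale-or-kill decision."""
    return start + timedelta(days=window_days)


plan = {
    "hypothesis": "Inline hints reduce support tickets",
    "max_exposure": "5% of new accounts",
    "measurement_window": "28 days",
    "stop_triggers": ["error rate doubles", "ticket volume rises"],
    "reviewer": "",  # no named reviewer yet
}
print(missing_items(plan))                 # ['reviewer']
print(decision_due(date(2024, 3, 1), 42))  # 2024-04-12
```

Treating an empty field as missing means a plan cannot launch with a blank reviewer, which is the point of naming one.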

In Summary

The call to “fail fast” served as a useful corrective when organizations were paralyzed by fear of mistakes; like a drill that cleared rubble, it made room for iteration. But as products, teams, and ecosystems grow more entwined and costly, that single mantra no longer fits every situation. What we need now is not a new slogan but a more nuanced toolkit: experiments designed to protect people and customers, feedback loops that privilege depth over haste, and a culture that tolerates slow, deliberate learning as well as rapid pivots. Treat failure less as an endpoint and more as a data point, one to be interpreted, contextualized, and, when possible, prevented. The wiser question may not be how fast you can fail, but how thoughtfully you can learn.